Getting scanning to work with Gimp on Trixie

Trixie ships Gimp 3.0.4, and the 3.x series has become incompatible with XSane, the common scanner frontend on Linux.

Hence the maintainer, Jörg Frings-Fürst, has temporarily disabled the Gimp integration in response to Debian bug #1088080.

There seems to be no tracking bug for getting the functionality back, but people have been commenting on Debian bug #993293 as that is ... loosely related.


There are two options for getting scanning back in Trixie until this is properly resolved by an updated XSane in Debian (e.g. via trixie-backports):

Lee Yingtong Li (RunasSudo) has created a Python script that calls XSane as a CLI application and published it at https://yingtongli.me/git/gimp-xsanecli/. This worked OK-ish for me, but a number of times I had to fish the scan out of /tmp/ myself. It is a good stop-gap if you need to scan from Gimp $now and want a quick solution.
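When a scan does not make it back into Gimp, a small helper can list what was written to /tmp/ most recently. This is a minimal bash sketch assuming GNU find. I don't know which file names gimp-xsanecli uses, so it does not match a pattern and simply lists all regular files modified within the last few minutes. The function name `recent_files` is made up for this sketch.

```shell
# recent_files [DIR] [MINUTES]: list regular files directly inside DIR that
# were modified within the last MINUTES minutes, newest first (GNU find).
recent_files() {
    local dir="${1:-/tmp}" minutes="${2:-10}"
    # %T@ prints the mtime as seconds since the epoch; sort -rn orders those
    # newest first (LC_ALL=C keeps '.' as the decimal point in any locale),
    # and cut drops the timestamp column again.
    find "$dir" -maxdepth 1 -type f -mmin "-$minutes" -printf '%T@ %p\n' 2>/dev/null \
        | LC_ALL=C sort -rn \
        | cut -d' ' -f2-
}
```

For example, `recent_files /tmp 5` right after a scan that did not show up in Gimp.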

Upstream has completed the necessary work to get XSane running as a Gimp 3.x plugin at https://gitlab.com/sane-project/frontend/xsane. Unfortunately, compiling it is a bit involved, so I built a version that can be dropped into /usr/local/bin or $HOME/bin and works alongside Gimp and the system-installed XSane.

So:

- Install Gimp and XSane:

  ```
  sudo apt install gimp xsane
  ```

- Download xsane-1.0.0-fit-003 (752kB, AMD64 executable for Trixie) and place it in /usr/local/bin (as root).
- Verify the checksum and make the file executable:

  ```
  sha256sum /usr/local/bin/xsane-1.0.0-fit-003
  # result needs to be af04c1a83c41cd2e48e82d04b6017ee0b29d555390ca706e4603378b401e91b2
  sudo chmod +x /usr/local/bin/xsane-1.0.0-fit-003
  ```

- Link the executable into the Gimp plugin directory as the user running Gimp:

  ```
  mkdir -p $HOME/.config/GIMP/3.0/plug-ins/xsane/
  ln -s /usr/local/bin/xsane-1.0.0-fit-003 $HOME/.config/GIMP/3.0/plug-ins/xsane/xsane
  ```

- Restart Gimp

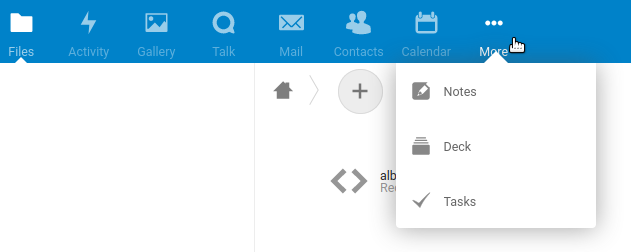

- Scan from Gimp via File → Create → Acquire → XSane
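The checksum comparison in the steps above is done by eye. A minimal bash sketch that lets `sha256sum --check` do the comparison and wraps the symlink step; the helper names `verify_sha256` and `link_xsane_plugin` are made up for this sketch, and the default plugin directory is the Gimp 3.0 per-user path used above.

```shell
# verify_sha256 FILE EXPECTED: succeed only if FILE's SHA-256 equals EXPECTED.
verify_sha256() {
    local file="$1" expected="$2"
    # sha256sum --check reads "HASH  FILENAME" lines from stdin ("-");
    # --status suppresses the report, so only the exit code signals the result.
    printf '%s  %s\n' "$expected" "$file" | sha256sum --check --status -
}

# link_xsane_plugin TARGET [PLUGIN_DIR]: create the per-user plugin symlink.
link_xsane_plugin() {
    local target="$1"
    local plugin_dir="${2:-$HOME/.config/GIMP/3.0/plug-ins/xsane}"
    mkdir -p "$plugin_dir"
    # Unlike the plain "ln -s" above, -f replaces a link left over from an
    # earlier attempt instead of failing.
    ln -sf "$target" "$plugin_dir/xsane"
}
```

For example: `verify_sha256 /usr/local/bin/xsane-1.0.0-fit-003 af04c1a83c41cd2e48e82d04b6017ee0b29d555390ca706e4603378b401e91b2 && sudo chmod +x /usr/local/bin/xsane-1.0.0-fit-003`, then, as the user running Gimp, `link_xsane_plugin /usr/local/bin/xsane-1.0.0-fit-003`.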

The source code for the xsane executable above is available under GPL-2 at https://gitlab.com/sane-project/frontend/xsane/-/tree/c5ac0d921606309169067041931e3b0c73436f00. At the time of writing, this is the latest upstream commit, dated 27 September 2025.
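If you want to inspect or rebuild the plugin yourself, the exact source tree can be fetched with git. A sketch, assuming GitLab's usual `.git` clone URL for the project; `fetch_xsane_source` is a hypothetical helper name, and it stops after checking out the commit, since the build dependencies and configure options are not covered here.

```shell
XSANE_REPO="https://gitlab.com/sane-project/frontend/xsane.git"
XSANE_COMMIT="c5ac0d921606309169067041931e3b0c73436f00"

# fetch_xsane_source [DEST]: clone upstream XSane and check out the commit
# the xsane-1.0.0-fit-003 build is based on.
fetch_xsane_source() {
    local dest="${1:-xsane}"
    git clone "$XSANE_REPO" "$dest" || return 1
    # Detached HEAD at the exact commit, so later upstream changes don't interfere.
    git -C "$dest" checkout --quiet "$XSANE_COMMIT" || return 1
    # Print hash and commit date to confirm what was checked out.
    git -C "$dest" log -1 --format='%H %cd'
}
```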

Debugging help

(added 06.05.2026)

Some people in the comments seem to have trouble getting the plugin to work on their systems, so here is a quick check:

Run the following on a shell command line:

```
$HOME/.config/GIMP/3.0/plug-ins/xsane/xsane --version
```

This should output:

```
xsane-1.0.0 (c) 1998-2022 Oliver Rauch
  E-mail: Oliver.Rauch@xsane.org
  package xsane-1.0.0-fit-003
  compiled with GTK-3.24.49
  with color management function
  with GIMP support, compiled with GIMP-3.0.4
  XSane output formats: jpeg, pdf(compr.), png, pnm, ps(compr.), tiff, txt
```

If it doesn't, the shell error may tell you which step of the instructions you missed (e.g. the +x attribute), or the dynamic loader will report which library is missing on your system.
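These checks can be bundled into one bash function that walks through the usual failure points: a missing or dangling symlink, a missing +x bit, libraries the loader cannot resolve, and `--version` output without GIMP support. A minimal sketch; the name `check_xsane_plugin` is made up, and the library check assumes `ldd` is available (it is skipped otherwise).

```shell
# check_xsane_plugin [PATH]: report common installation problems of the XSane
# Gimp plugin; returns 0 only if the binary runs and reports GIMP support.
check_xsane_plugin() {
    local plugin="${1:-$HOME/.config/GIMP/3.0/plug-ins/xsane/xsane}"
    local missing

    # A dangling symlink passes -L but fails -e.
    if [ -L "$plugin" ] && [ ! -e "$plugin" ]; then
        echo "broken symlink: $plugin -> $(readlink "$plugin")" >&2
        return 1
    fi
    if [ ! -e "$plugin" ]; then
        echo "missing: $plugin (run the mkdir/ln -s step as the Gimp user)" >&2
        return 1
    fi
    # -x follows the symlink, so this checks the target's +x attribute.
    if [ ! -x "$plugin" ]; then
        echo "not executable: $plugin (chmod +x the symlink target)" >&2
        return 1
    fi

    # Shared libraries the dynamic loader cannot resolve show up as "not found".
    if command -v ldd >/dev/null; then
        missing=$(ldd "$plugin" 2>/dev/null | grep 'not found' || true)
        if [ -n "$missing" ]; then
            printf 'missing libraries:\n%s\n' "$missing" >&2
            return 1
        fi
    fi

    # A working build mentions GIMP support in its --version output.
    if ! "$plugin" --version 2>&1 | grep -q 'with GIMP support'; then
        echo "no GIMP support reported by: $plugin --version" >&2
        return 1
    fi
    echo "ok: $plugin"
}
```

Called without arguments, it checks the default plugin path from the instructions above.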